Why You Are Probably Right About Everything

In the popular 1999 scary movie “The Sixth Sense,” one of the most chilling lines is spoken by a young kid, Cole, who can see ghosts. He’s explaining to Bruce Willis’ character that ghosts don’t know that they themselves are ghosts. (Spoiler alert: Bruce Willis is a ghost and doesn’t realize it the whole movie.) Cole explains that this is because the ghosts “only see what they want to see.” The ghosts are unaware of their ethereal state for the simple reason that they don’t want to know it. They want to believe that they are still alive.

In modern society, and I suspect throughout all of human history, we also only “see what we want to see.”

This is particularly evident in politics. To one person, Hillary Clinton may seem like the perfect next president. To others, she seems like the devil incarnate. In the last election, it was so incredibly obvious to some that Mitt Romney would be the perfect president with his business background. But somehow, more than half of voters disagreed.

How does this happen? Well, we only see what we want to see. Conservatives watch Fox News and read all about the illegalities of Hillary Clinton and her lack of experience in the private sector. Democrats watch CNN and read daily about the stiffness of Mitt Romney and the insanity of Donald Trump. People, almost without fail, will subscribe to the information that supports their views. We hear what we want to hear. We pass over information that doesn’t support our views, ironically dismissing it as biased or untrustworthy.

Because of this habit, people are actually quite unchanging. People change their political stance maybe once or twice in their lives. Their core beliefs on politics and policies are inflexible, since they are continually fed with subjective information, subconsciously selected to support their views. It’s ironic that most politicians and political parties can’t do a single thing to change 90% of Americans’ minds, no matter how well they debate or how much money they spend on the campaign trail.

And it extends far beyond politics. If I’m a health enthusiast who believes in fish oil, I’m going to find, click on, read, share, and believe articles that tell me that fish oil prevents cancer, creates beautiful skin, and increases brain function. I’m buying the fish oil, so I’m going to choose to believe that it’s worth my money.

If I buy a Shake Weight to build my biceps, I’m going to read success stories (with little research into whether they’re real or not) about how people used the Shake Weight to build their own biceps. Because here’s the thing: I don’t want to look like a fool, and I just spent $30 on a Shake Weight, gosh darn it. And if I’m wrong, I’ll by definition be a fool for buying it.

I’d rather be right, and as I hope you’re picking up on, it’s not hard to convince yourself that you are right, regardless of the elusive truth.

Turns out this is a proven phenomenon called the confirmation bias, “the tendency to search for, interpret, favor, and recall information in a way that confirms one’s beliefs or hypotheses while giving disproportionately less attention to information that contradicts it.” This bias leads to false memories, belief perseverance (holding on to things even in the face of strong evidence against them), illusory correlation (linking unrelated events together), and attitude polarization (two people in complete disagreement even in the face of the same pile of evidence). People tend to confirm their existing beliefs and to test ideas in one-sided ways in order to decrease the possibility of being wrong. You can read all about it in depth on Wikipedia.

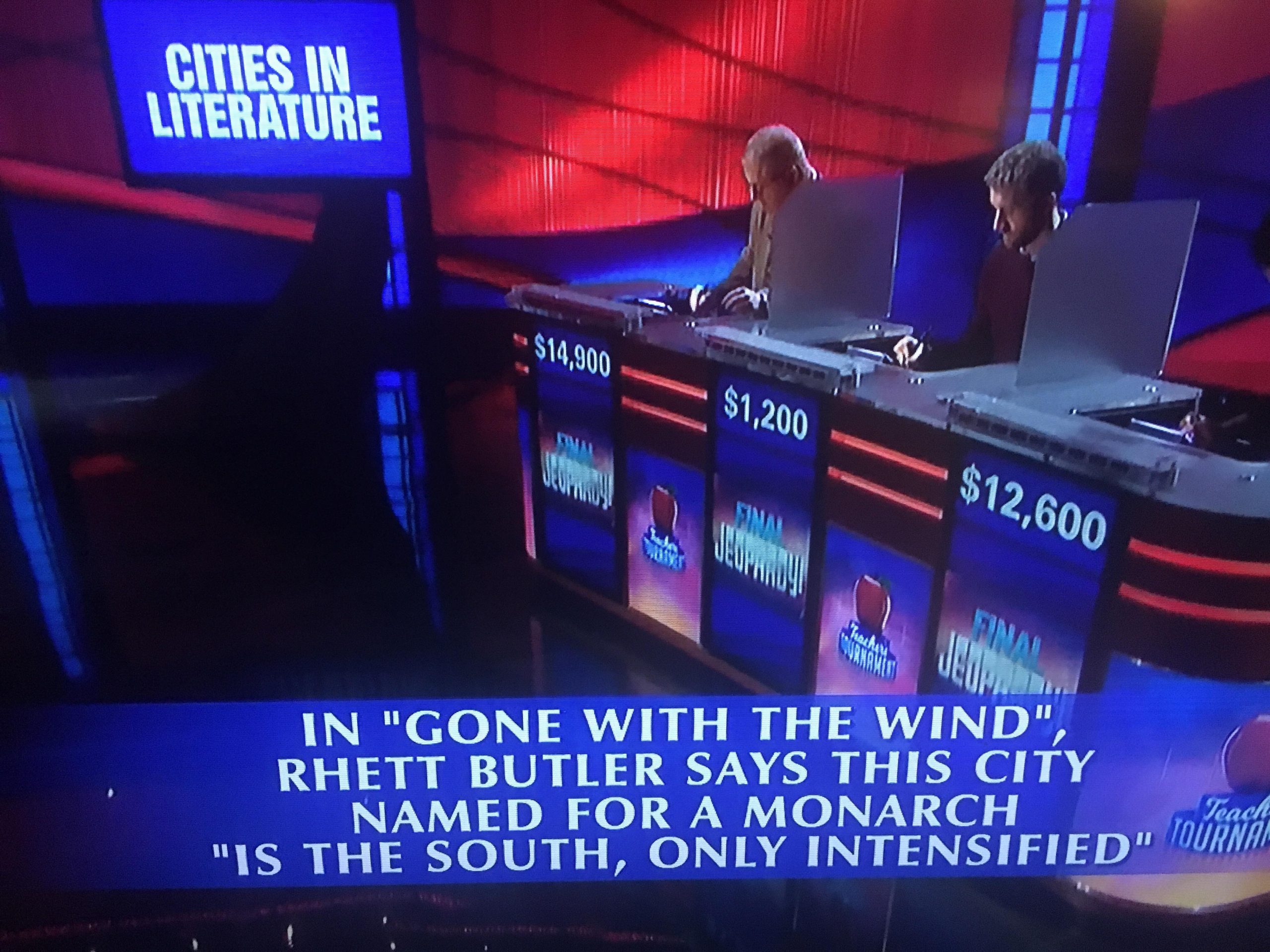

A recent example from the news comes from the shooting in Ferguson, Missouri. People who wanted to believe the cop’s side of the story could easily find witness testimonials, evidence, and justification for the cop. Those who wanted to believe Michael Brown’s side could just as easily find witness testimonials, evidence, and justification for his side of the story. I have no idea what actually happened, and probably nobody ever will. But most people will see what they want to see and find evidence to back their version of the truth.

On the largest scale, people do this with their life situation, their lifelong religion, their decision to marry or stay single, their diet, their choice to have children or not, etc. Nobody likes to be wrong, especially in a decision as big as your family, your career, or your religion.

And so, people associate with people just like them. Christians go to church with Christians and talk about how they’re all going to be saved together. Single people have single friends who confirm that the single life is the best life. Entrepreneurs go to weeklong startup events and bash people who have “real jobs” and talk about how entrepreneurs are the only ones living life to its fullest. We read about and “like” and follow people who agree with our stances and who share our longtime values. Because every time they say something we agree with, little endorphins fire in our brains and we get the wonderful feeling of affirmation. We get to know that we were right!

That’s the attitude we’re born with, and the one we need to fight. There’s no way that every political stance and religion and diet and career path can be right, but we’re programmed to pick something and then confirm the heck out of it. That makes truth very elusive unless we actively accept that there are other good stances worth exploring. Maybe it’s worth it to subscribe to information from the opposing side, and to truly walk in others’ shoes.

Otherwise, you’ll keep being right for your whole entire life.
